No code or download required!

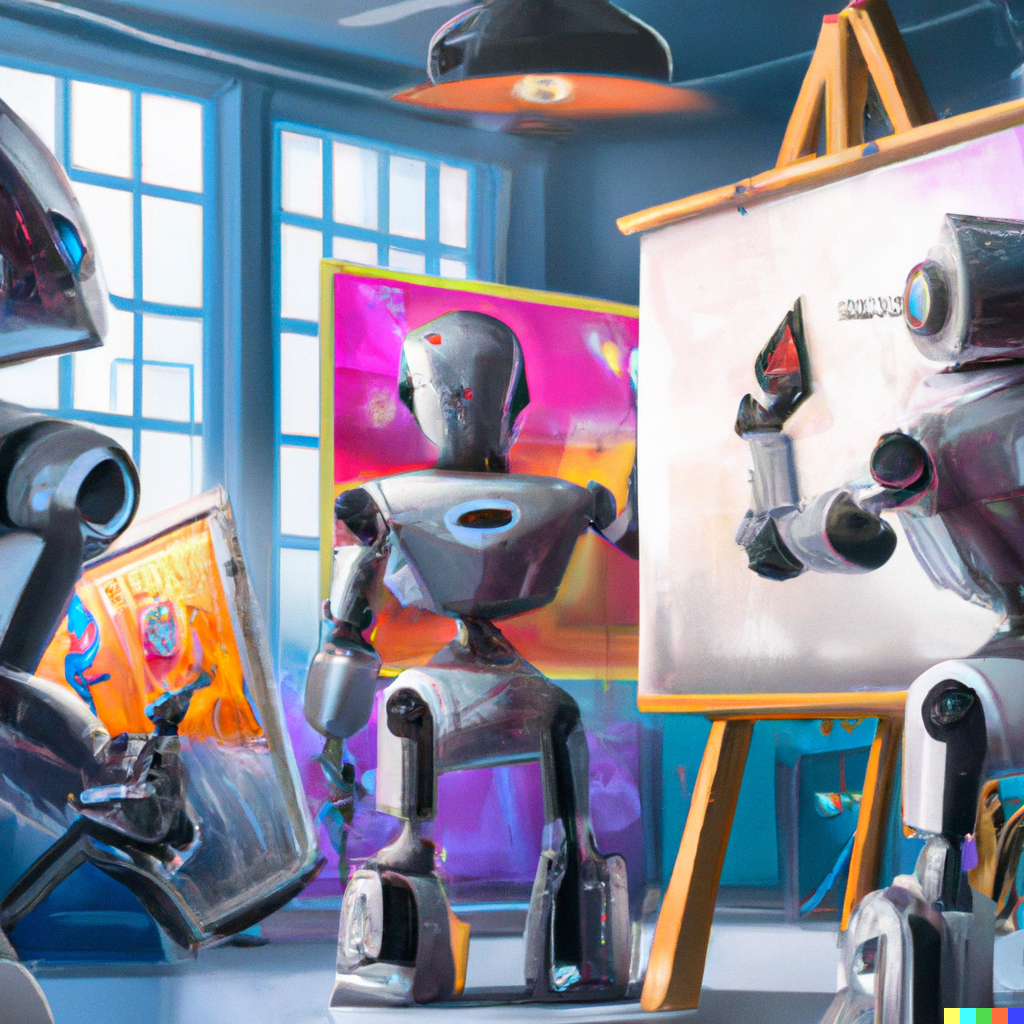

Text-to-image technology uses AI to interpret your words and transform them into a unique image. AI art generators, now available to the public, open a new era: they change the creative process and expand the very idea of what it means to be an artist. Anyone can make art now!

Let’s take a look at three AI art generators to try today. No code or download required! For some, even registration is optional.

Stable Diffusion

“Stability AI puts the power back into the hands of developer communities …” ~ Emad Mostaque, founder and CEO of Stability AI.

A free, open-source text-to-image generator.

Text-to-image functionality is available without registration and is ready to use immediately, with no restrictions on prompts or on the number of images. Stable Diffusion does not collect or use any personal data, nor does it store your prompts or images. So make sure to save your creations!

Images created through Stable Diffusion Online are in the public domain: they explicitly fall under the CC0 1.0 Universal Public Domain Dedication.

Stable Diffusion AI is best used for generating aesthetically pleasing photos and designs from short prompts. It is also good for clear and accurate close-ups of faces (including famous people).

Website: Stable Diffusion

Who: collaboration of Stability AI, CompVis LMU, and Runway with support from EleutherAI and LAION.

Repository: https://github.com/Stability-AI/stablediffusion

Written in: Python

Type: Latent diffusion model

Dataset: the 2-billion-image English-caption subset of LAION-5B

Dall-E 2

“OpenAI’s mission is to ensure that artificial general intelligence (AGI)—by which we mean highly autonomous systems that outperform humans at most economically valuable work—benefits all of humanity”

An AI art generator from OpenAI with a limited free tier. It is the most versatile art generator so far: it creates images from text captions for a wide range of concepts expressible in natural language, and it understands hyper-specific details and lengthy prompts. It is extremely creative and at the same time obedient to the prompt.

Unlike the original Dall-E, which used a 12-billion-parameter version of the GPT-3 transformer model, Dall-E 2 encodes the text prompt with CLIP and generates the corresponding image with a diffusion model. Since Dall-E 2 appears to have been trained largely on stock photos, it is best used for stock-like images or outputs that require adherence to lengthy prompts and complex details. It has biases and limitations inherited from stock content: underrepresentation of some topics (Australiana being one example), gender and ethnic biases, etc.

To use Dall-E 2, you need to register with an email and phone number. After that, you will receive 50 credits for the first month. One credit equals one prompt. 15 free credits are available each month, or you can buy additional credits.
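You do not need any code to use Dall-E 2, but if you are curious how far the free credits stretch, the arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `free_credits` is made up for this example, the 15 monthly credits are assumed to start in the second month, and the sketch simply adds up the credits granted without modelling any expiry rules.

```python
def free_credits(months: int, first_month: int = 50, monthly: int = 15) -> int:
    """Total free credits granted over `months` months of use.

    Assumption: 50 credits arrive in the first month and 15 in every
    later month; expiry of unused credits is not modelled.
    """
    if months <= 0:
        return 0
    return first_month + monthly * (months - 1)


# One credit equals one prompt, so this is also the number of free prompts.
for m in (1, 3, 12):
    print(f"{m} month(s): {free_credits(m)} free prompts")
```

Under these assumptions, three months of casual use gives you 50 + 15 + 15 = 80 free prompts.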

Web capabilities of Dall-E 2 include text-to-image generation, inpainting, and outpainting; the latter two use context from an image to fill in missing areas in a medium consistent with the original, following a given prompt.

Website: Dall-E 2

Who: OpenAI

Type: Diffusion model conditioned on CLIP embeddings (the original Dall-E was a transformer language model)

Dataset: Shutterstock images (most likely with some other datasets)

Midjourney

“Beautiful by default”

A proprietary text-to-image art generator with a free trial.

Midjourney is all about aesthetics. Its images are crisp, painterly and very appealing, with creative use of light and shadow, adherence to colour theory and eye-pleasing composition. Midjourney’s style can best be described as a fusion of a highly realistic painting, CGI, and photography.

At the time of writing (Jan 2023), it is only available through a Discord bot. To generate an image, use the /imagine command and enter a text prompt. As a free/trial user, you will interact with a bot in a public chat room where everyone else is generating images. So, it can be a frantic experience.

Website: Midjourney

Who: independent research lab founded by David Holz, co-founder of Leap Motion.

Type: no official statements about the exact methods and models

Dataset: not known; may include copyrighted artists’ works